The Backtest Lie That Every Trader Believes

Here's a scenario that plays out thousands of times a week in trading forums and Discord servers: someone posts a backtest showing 180% annual return, 89% win rate, and virtually no drawdown. The equity curve is a straight line going up. Comments flood in asking "what strategy is this?"

The answer, almost always: it's a lie. Not a deliberate one, necessarily - but a backtest so riddled with methodological errors that it bears no relationship to what the strategy would actually produce in live trading.

This guide explains exactly why most backtests are wrong, what the common mistakes look like in practice, and how to run a backtest that actually predicts future performance.

Why Most Backtests Lie

The gap between a backtest and live performance comes from specific, identifiable errors. Here are the four that cause the most damage.

1. Overfitting (Curve-Fitting)

Overfitting happens when you optimize your strategy so thoroughly to historical data that it "memorizes" the past instead of learning from it. A strategy with 15 parameters, all fine-tuned to the exact data they were tested on, will produce spectacular backtest results - and fail immediately when the market moves slightly differently.

The classic example: you test a moving average crossover strategy on 2022-2024 data and try 500 different MA period combinations. You find one that produced 240% return with low drawdown. The problem: if you tested 500 combinations, you didn't find a strategy - you found a lottery ticket that already won. Those MA periods happened to align with trend reversals in that specific two-year window. On the next two years of data, expect it to perform no better than chance.
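To see how easily a "winner" appears by chance, here is a minimal simulation sketch. It assumes 500 hypothetical strategies with zero true edge (each trade is a coin flip of +1R or -1R) and picks the one with the best in-sample total. The function name, trade counts, and seed are illustrative assumptions, not part of any real backtest.

```python
import random

def best_of_many(n_strategies=500, n_trades=100, seed=7):
    """Simulate strategies with zero true edge and pick the in-sample winner."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_strategies):
        # Each trade is a coin flip: +1R or -1R, so the true EV is exactly 0.
        in_sample = sum(rng.choice((1, -1)) for _ in range(n_trades))
        out_sample = sum(rng.choice((1, -1)) for _ in range(n_trades))
        results.append((in_sample, out_sample))
    # Select purely on in-sample performance, as an optimizer would.
    best_in, best_out = max(results, key=lambda r: r[0])
    avg_out = sum(r[1] for r in results) / n_strategies
    return best_in, best_out, avg_out

best_in, best_out, avg_out = best_of_many()
print(f"best in-sample total: {best_in:+d}R")
print(f"same strategy out-of-sample: {best_out:+d}R")
print(f"average out-of-sample across all: {avg_out:+.2f}R")
```

The in-sample winner looks like an edge only because it was selected from many tries. Its out-of-sample result is just another coin-flip sequence.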

How to detect it: run your optimized parameters on a hold-out sample (data you didn't optimize on). If performance degrades by more than 40-50%, you're curve-fitted. If it holds up within a reasonable range, you may have a real edge.

The rule of thumb from quantitative research: limit yourself to 3-5 free parameters. Every additional parameter you optimize multiplies the number of combinations you are implicitly searching, so overfitting risk grows quickly. Require that the edge survives a plus/minus 10% perturbation of each parameter - if adjusting your 14-period RSI to 13 or 15 completely collapses results, the edge is fragile.
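The perturbation check can be sketched roughly as follows. This assumes you already have some function that runs a backtest for a parameter set and returns a positive score. `toy_backtest` is a hypothetical stand-in for it, and the parameter names are illustrative only.

```python
def perturbation_report(evaluate, params, pct=0.10):
    """Re-score a strategy with each parameter nudged by +/- pct, one at a time."""
    base = evaluate(params)
    report = {}
    for name, value in params.items():
        scores = []
        for factor in (1 - pct, 1 + pct):
            nudged = dict(params)
            # Keep integer parameters (e.g. indicator periods) as integers.
            nudged[name] = round(value * factor) if isinstance(value, int) else value * factor
            scores.append(evaluate(nudged))
        # Fraction of the baseline score retained in the worse of the two nudges.
        report[name] = min(scores) / base if base else float("nan")
    return report

# Hypothetical stand-in for a real backtest: a smooth score surface that peaks
# at rsi_period=14 and stop_atr=2.0.
def toy_backtest(p):
    return 1.0 - 0.01 * (p["rsi_period"] - 14) ** 2 - 0.2 * (p["stop_atr"] - 2.0) ** 2

report = perturbation_report(toy_backtest, {"rsi_period": 14, "stop_atr": 2.0})
for name, retained in report.items():
    print(f"{name}: worst case keeps {retained:.0%} of baseline")
```

A parameter whose worst-case retention falls far below 100% is one where the result depends on hitting an exact value, which is the fragility described above.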

2. Survivorship Bias

When you backtest on current market assets, you're only looking at the ones that survived to today. In stock trading, this means you're testing on companies that didn't go bankrupt, didn't delist, and didn't merge - which creates a systematic upward bias. The companies that would have hurt your strategy most are invisible in your data.

For crypto, this is particularly acute. Testing a "buy all top-20 crypto by market cap" strategy using today's top-20 means you're backtesting with the benefit of knowing which coins became top-20. In 2019, that list included IOTA, Dash, and NEM - assets that are down 90%+ from those prices. A strategy tested on 2019 data using 2026's top-20 looks dramatically better than reality.
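One common way to avoid this is to build the tradable universe from point-in-time ranking snapshots, so each backtest date only sees the list that existed then. The sketch below assumes you have such snapshots. The symbols are hypothetical placeholders, not real rankings, and `universe_as_of` is an illustrative helper name.

```python
from bisect import bisect_right

def universe_as_of(snapshots, date):
    """Return the most recent ranking snapshot dated on or before `date`.

    `snapshots` is a list of (iso_date, [symbols]) sorted by date.
    """
    dates = [d for d, _ in snapshots]
    i = bisect_right(dates, date)   # ISO dates sort correctly as strings
    if i == 0:
        raise ValueError(f"no snapshot on or before {date}")
    return snapshots[i - 1][1]

# Hypothetical placeholder symbols, not real rankings.
snapshots = [
    ("2019-01-01", ["AAA", "BBB", "CCC"]),
    ("2022-01-01", ["AAA", "CCC", "DDD"]),
    ("2026-01-01", ["AAA", "DDD", "EEE"]),
]

print(universe_as_of(snapshots, "2019-06-15"))  # uses the 2019 list, including BBB
print(universe_as_of(snapshots, "2023-03-01"))  # uses the 2022 list
```

A backtest that calls this on every rebalance date still holds the assets that later dropped out of the ranking, which is exactly what a today's-list backtest hides.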

For gold (XAUUSD), survivorship bias shows up differently. Gold itself doesn't disappear, but the data can lie by omission: brokers that went bust during the 2020 gold spike had the widest spreads and worst fills. If your data comes from a surviving broker with tight spreads, you're backtesting on the best-case execution scenario. During the March 2020 crash, some brokers had XAUUSD spreads of 50+ pips - if your backtest assumes 2 pips throughout, you're living in fantasy land.

3. Ignoring Fees, Spread, and Slippage

This is the error that kills more strategies than any other, particularly for high-frequency and scalping systems.

A gold scalping strategy that targets 5-8 pip moves and wins 70% of the time looks incredible in a backtest. Add realistic XAUUSD spread (1.5-2.5 pips on a standard account, higher during news) plus commission (typically $3-5 per lot round-trip) plus slippage during volatile conditions (1-3 pips on gold news events), and the edge can disappear entirely or reverse.

The math: a 5-pip target with 2-pip spread plus the equivalent of 1 pip in commission plus 0.5-pip average slippage means 3.5 pips of cost per round trip, so your actual net target is 1.5 pips. The same 3.5 pips is also added to every losing trade. At 70% win rate with a 2:1 reward/risk ratio (a 2.5-pip stop), the gross edge was substantial: about 2.75 pips per trade. After costs, each win nets 1.5 pips and each loss costs 6, so the expectancy falls to roughly -0.75 pips per trade. The edge has not shrunk; it has reversed.

Always run backtests with:

- Realistic spread for your specific broker and account type

- Commission per lot matching your actual fee schedule

- At least 0.5-1 pip slippage built in for entry and exit

- For high-volatility periods (news, open/close), multiply slippage estimates by 3-5x
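The cost arithmetic from the worked example can be sketched as a small function. It assumes costs are paid on every trade, win or lose, and that a 2:1 reward/risk ratio on a 5-pip target implies a 2.5-pip stop. Both are modeling assumptions, and the function name is illustrative.

```python
def net_expectancy_pips(win_rate, target, stop, spread, commission, slippage):
    """Per-trade expectancy in pips, before and after round-trip costs."""
    cost = spread + commission + slippage      # paid on every trade, win or lose
    gross = win_rate * target - (1 - win_rate) * stop
    net = win_rate * (target - cost) - (1 - win_rate) * (stop + cost)
    return gross, net, cost

# Figures from the example above; the 2.5-pip stop is an assumption implied by
# a 5-pip target at 2:1 reward/risk.
gross, net, cost = net_expectancy_pips(
    win_rate=0.70, target=5.0, stop=2.5,
    spread=2.0, commission=1.0, slippage=0.5,
)
print(f"round-trip cost: {cost:.1f} pips")
print(f"gross expectancy: {gross:+.2f} pips per trade")
print(f"net expectancy:   {net:+.2f} pips per trade")
```

Re-running it with your own broker's spread and commission, and with slippage multiplied by 3-5x for news periods, shows how much of the gross edge survives.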

4. Look-Ahead Bias

Look-ahead bias occurs when your strategy uses information that wouldn't have been available at the time of the trade. Common examples include using the closing price of a candle to decide whether to trade that candle, using daily pivots calculated from today's data for today's trades, or not accounting for the delay between signal generation and order execution.

In automated backtesting platforms, this can be introduced invisibly by coding errors. The result: a backtest that appears to enter at perfect prices that could only have been known after the fact.
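A minimal sketch of one way to guard against this in your own code: generate the signal only from bars that have fully closed, and fill at the open of a later bar. The bar layout, `simulate`, and `up_close` are hypothetical names for illustration, and the one-bar delay is an assumption about execution latency.

```python
def simulate(bars, signal_fn, delay=1):
    """Enter at the open of the bar `delay` bars after the signal bar closes.

    `bars` is a list of dicts with "open" and "close" keys, oldest first.
    `signal_fn(history)` sees only bars that have fully closed.
    """
    entries = []
    for i in range(len(bars) - delay):
        history = bars[: i + 1]            # bar i has closed; nothing later is visible
        if signal_fn(history):
            fill_bar = i + delay
            entries.append((fill_bar, bars[fill_bar]["open"]))
    return entries

# Hypothetical signal: the last close is above the previous close.
def up_close(history):
    return len(history) >= 2 and history[-1]["close"] > history[-2]["close"]

bars = [
    {"open": 100, "close": 101},
    {"open": 101, "close": 103},   # signal fires once this bar has closed
    {"open": 104, "close": 102},   # earliest realistic fill: this bar's open
    {"open": 102, "close": 105},
]
print(simulate(bars, up_close))
```

Calling `simulate(bars, up_close, delay=0)` reproduces the bug: the fill happens at the open of the same bar whose close produced the signal, a price that was gone by the time the signal existed.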

Key Metrics: What to Measure and What They Mean

Expected Value (EV)

EV is the single most important number in any backtest. It tells you the average outcome per trade, measured in units of risk (R).

EV = (Win Rate x Average Win in R) - (Loss Rate x Average Loss in R)

A strategy with 48% win rate, average win of 2.2R, and average loss of 1.0R produces an EV of:

(0.48 x 2.2) - (0.52 x 1.0) = 1.056 - 0.52 = 0.536R per trade

At $100 risk per trade, that's $53.60 average profit per trade over a large sample. Practical benchmarks:

- EV below 0.3R: Marginal - execution costs will likely eliminate the edge

- EV 0.3-0.7R: Acceptable - viable with low fees and disciplined execution

- EV 0.7-1.5R: Strong edge - worth running live with proper position sizing

- EV above 1.5R: Excellent - verify it's not overfitted
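The EV formula and the benchmark bands translate directly into a few lines of code. This is a sketch using the numbers from the example above. How the boundary values 0.7R and 1.5R are assigned to a band is an assumption, since the listed ranges touch at their edges.

```python
def expected_value(win_rate, avg_win_r, avg_loss_r=1.0):
    """EV per trade in R: win_rate * avg_win - loss_rate * avg_loss."""
    return win_rate * avg_win_r - (1 - win_rate) * avg_loss_r

def ev_band(ev):
    """Map an EV in R to the benchmark bands listed above."""
    if ev < 0.3:
        return "marginal"
    if ev < 0.7:
        return "acceptable"
    if ev <= 1.5:
        return "strong"
    return "excellent (check for overfitting)"

ev = expected_value(0.48, 2.2, 1.0)
print(f"EV = {ev:.3f}R, ${ev * 100:.2f} per trade at $100 risk, band: {ev_band(ev)}")
```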

Profit Factor

Profit Factor (PF) is gross profit divided by gross loss across all trades. A PF of 1.74 means the strategy earns $1.74 for every $1 lost. Treat 1.5 as the minimum threshold for a strategy worth trading live.
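As a quick sketch, PF can be computed from a list of per-trade results. The trade values below are hypothetical and were chosen only so the result matches the 1.74 figure used above.

```python
def profit_factor(trade_pnls):
    """Gross profit divided by gross loss; inf if there are no losing trades."""
    gross_profit = sum(p for p in trade_pnls if p > 0)
    gross_loss = -sum(p for p in trade_pnls if p < 0)
    return gross_profit / gross_loss if gross_loss else float("inf")

# Hypothetical per-trade results in dollars.
trades = [120.0, -50.0, 80.0, -100.0, 61.0]
print(f"PF = {profit_factor(trades):.2f}")
```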

Maximum Drawdown

The largest peak-to-trough decline in the equity curve. Critical for position sizing: if your strategy has a historical max drawdown of 22%, you need to size positions such that a 22% drawdown is psychologically and financially survivable - which for most traders means sizing down significantly from what the backtest "suggests."

Recovery Factor

Net profit divided by maximum drawdown. A recovery factor above 2.0 means the strategy has earned at least twice what it lost in its worst drawdown period. Below 1.0 means the strategy's total net profit is smaller than its worst drawdown - a red flag for long-term viability.
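Maximum drawdown and recovery factor can both be read off an equity curve. The sketch below assumes a list of account values that stay positive, and the curve itself is hypothetical.

```python
def max_drawdown(equity):
    """Largest peak-to-trough decline, as a fraction of the peak and in currency."""
    peak = equity[0]
    worst_pct = 0.0
    worst_abs = 0.0
    for value in equity:
        peak = max(peak, value)                      # running high-water mark
        worst_abs = max(worst_abs, peak - value)
        worst_pct = max(worst_pct, (peak - value) / peak)
    return worst_pct, worst_abs

def recovery_factor(equity):
    """Net profit divided by the largest drawdown in currency terms."""
    _, dd_abs = max_drawdown(equity)
    net_profit = equity[-1] - equity[0]
    return net_profit / dd_abs if dd_abs else float("inf")

# Hypothetical equity curve in dollars.
equity = [10_000, 11_000, 9_900, 10_500, 12_200]
dd_pct, dd_abs = max_drawdown(equity)
print(f"max drawdown: {dd_pct:.1%} (${dd_abs:,.0f})")
print(f"recovery factor: {recovery_factor(equity):.2f}")
```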

Real Example: BTC Trend-Following Strategy

Here's a real-world case study in the difference between naive backtesting and proper methodology.

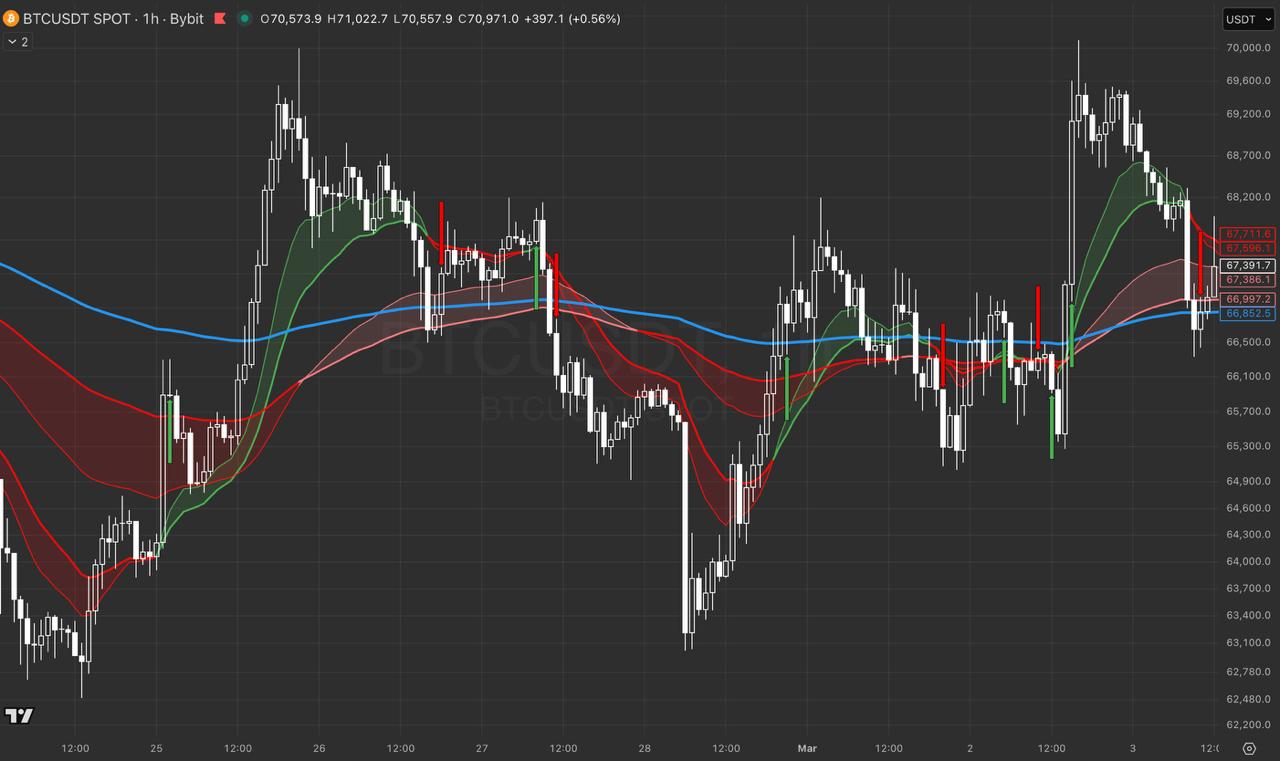

The strategy: BTC 1H trend-following using moving average crossover and momentum confirmation. Tested over 4.1 years on BTCUSDT 1H data.

Initial naive backtest results (no fees, no slippage, in-sample optimized):

- Win Rate: 54.2%

- Average Win: 3.04R

- EV: 1.64R per trade

- Profit Factor: 3.2

Looks impressive. So we applied proper methodology:

Step 1: Added realistic exchange fees (0.1% per side on spot, equivalent to 0.2% round-trip). Knocked EV down to 1.38R.

Step 2: Added realistic slippage (0.1-0.3% per fill during normal conditions, higher during news). EV dropped to 1.18R.

Step 3: Walk-forward testing - split the 4.1-year dataset into rolling in-sample and out-of-sample windows. Re-optimized parameters on each in-sample window, tested on the subsequent out-of-sample period. Averaged performance across all out-of-sample windows.

Step 4: Eliminated look-ahead bias by fixing a code error that used close-of-candle prices for signal generation.

Final verified results across 218 trades:

- Win Rate: 48.3%

- Expected Value: 0.80R (vs theoretical 1.64R)

- Profit Factor: 2.61

- Test period: 4.1 years

- Trade count: 218 - statistically meaningful

The EV dropped from 1.64R to 0.80R - a 51% reduction. That's not a rounding error; that's the cost of doing the analysis properly. The strategy still has a genuine edge, but the difference between the naive and rigorous result is the difference between unrealistic expectations and a strategy you can actually run sustainably.
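To put a rough error bar on a 218-trade sample, one option is a normal-approximation confidence interval on the win rate. This is a sketch that treats trades as independent, which trend-following trades may not be, so read the interval as optimistic. The 50-trade comparison is a hypothetical added for contrast.

```python
import math

def win_rate_interval(win_rate, n_trades, z=1.96):
    """Normal-approximation confidence interval for a win rate."""
    se = math.sqrt(win_rate * (1 - win_rate) / n_trades)
    return win_rate - z * se, win_rate + z * se

low, high = win_rate_interval(0.483, 218)
print(f"218 trades: {low:.1%} to {high:.1%}")

low_small, high_small = win_rate_interval(0.483, 50)
print(f" 50 trades: {low_small:.1%} to {high_small:.1%}")
```

The interval narrows with the square root of the trade count, which is why small samples say so little about the true win rate.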

The Mean-Reversion Graveyard

One finding worth calling out explicitly: the same methodology was applied to mean-reversion variants of the BTC strategy across 81+ parameter combinations. RSI-based reversals, Bollinger Band bounces, deviation-from-mean entries - every flavor of "buy the dip" and "sell the rip" was tested. Every single combination produced negative EV after fees and slippage. The best one managed -0.02R per trade.

This is a data-backed claim most crypto content won't make: mean-reversion on BTC does not work. Bitcoin is a trending asset. It trends up, it trends down, and it rewards traders who ride those trends. Betting on "it'll come back" is a losing strategy over hundreds of trades. If your BTC system is built on oversold bounces, test it properly before you find out the hard way.

Walk-Forward Testing: The Gold Standard

Walk-forward testing is the closest you can get to simulating live trading with historical data. Here's how it works:

1. Split the data into rolling windows: For example, with 4 years of data, use 12-month in-sample windows with 3-month out-of-sample windows.

2. Optimize on in-sample, test on out-of-sample: Optimize your parameters on the first 12 months. Test with those parameters on months 13-15 (out-of-sample). Record results.

3. Roll forward: Move the window forward by the length of the out-of-sample period, so the test periods never overlap. Now your in-sample is months 4-15, out-of-sample is months 16-18. Optimize again, test again.

4. Aggregate the out-of-sample results: These aggregated results represent your strategy's performance on data it was never optimized on. If it holds up reasonably well, the edge may be genuine.
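The window bookkeeping from the steps above can be sketched as a small generator. It works in whole months and, by default, steps forward by the out-of-sample length so that test periods do not overlap. That default is an assumption, and `step` can be set to any other roll size. The function name is illustrative.

```python
def walk_forward_windows(n_months, in_sample=12, out_sample=3, step=None):
    """Yield ((is_start, is_end), (oos_start, oos_end)) as 1-based inclusive months."""
    step = out_sample if step is None else step   # default: non-overlapping test windows
    start = 1
    while start + in_sample + out_sample - 1 <= n_months:
        is_end = start + in_sample - 1
        yield (start, is_end), (is_end + 1, is_end + out_sample)
        start += step

windows = list(walk_forward_windows(48))
for in_s, out_s in windows[:3]:
    print(f"optimize on months {in_s[0]}-{in_s[1]}, test on months {out_s[0]}-{out_s[1]}")
print(f"{len(windows)} windows in total")
```

In a real run, each pair would drive one optimization on the in-sample range and one untouched evaluation on the out-of-sample range, with only the latter feeding the aggregate.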

The key insight from the QuantInsti research on walk-forward optimization: strategies that look good in walk-forward testing consistently outperform strategies validated only on a single in-sample/out-of-sample split. Rolling windows reduce the chance that a single split happens to land on an unusually easy test period.

Platform Matters: MetaTrader's Strategy Tester and Its Limitations

If you're backtesting on MetaTrader 5 - which most forex and gold traders are - understand its Strategy Tester's limitations. The "1 minute OHLC" modeling mode is adequate for strategies on 1H+ timeframes, but unreliable for scalping or anything below 15M. For scalping EAs, you need "Every tick based on real ticks" mode with high-quality tick data, otherwise your backtest is generating phantom fills at prices that never existed.

MT5's optimizer is powerful but dangerous: it makes it trivially easy to run thousands of parameter combinations and pick the best one. That's exactly the overfitting trap described above. Use the optimizer to identify parameter regions that perform well (a cluster of profitable settings), not individual optimal points. If only one narrow parameter set works and everything around it fails, that's curve-fitting, not edge discovery.
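One way to look for regions rather than peaks, sketched outside MT5: export the optimizer results and score each grid point by the median of itself and its neighbors. The grid below is hypothetical, the function names are illustrative, and edge points simply have fewer neighbors, which is a simplification.

```python
from statistics import median

def neighborhood_score(results, point, radius=1):
    """Median score of a grid point and its neighbors within `radius` steps."""
    x, y = point
    nearby = [
        score for (i, j), score in results.items()
        if abs(i - x) <= radius and abs(j - y) <= radius
    ]
    return median(nearby)

def most_robust_point(results, radius=1):
    """Pick the grid point whose surrounding region scores best, not the single peak."""
    return max(results, key=lambda p: neighborhood_score(results, p, radius))

# Hypothetical optimizer output: (fast_index, slow_index) -> profit factor.
results = {
    (0, 0): 0.8, (0, 1): 0.9, (0, 2): 0.7, (0, 3): 1.4, (0, 4): 1.5,
    (1, 0): 0.9, (1, 1): 3.1, (1, 2): 0.8, (1, 3): 1.6, (1, 4): 1.7,
    (2, 0): 0.7, (2, 1): 0.8, (2, 2): 0.9, (2, 3): 1.5, (2, 4): 1.6,
}
peak = max(results, key=results.get)
print("single best point:", peak)
print("most robust point:", most_robust_point(results))
```

Here the isolated 3.1 spike is surrounded by losing settings, so the neighborhood score steers the choice toward the cluster of modestly profitable settings instead.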

Well-designed automated systems on gold include built-in walk-forward validation in their development methodology so the published backtest results reflect out-of-sample performance, not in-sample optimization peaks.

The Proper Backtesting Checklist

Before calling any backtest valid, confirm all of the following:

- Minimum 3 years of data - ideally 4-5 years including at least one major market shock

- 200+ trades - a sample of fewer than 100 trades has very little statistical power

- Fees and spread included - use your actual broker's costs, not zero

- Slippage accounted for - minimum 0.5 pip / 0.1% per fill

- Walk-forward validation completed - not just a single in-sample/out-of-sample split

- Look-ahead bias checked - verify signal generation uses only data available at trade time

- Hold-out data preserved - keep at least 20-30% of data for final validation, never touch it during development

- Parameter sensitivity tested - small changes in parameters should not collapse results

- Monte Carlo simulation run - to understand worst-case drawdown scenarios
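For the Monte Carlo item, a minimal bootstrap sketch: resample the backtest's trades with replacement many times and look at the distribution of maximum drawdowns. It assumes trades are independent, which understates losing streaks if losses cluster. The trade list is hypothetical, and the run count and seed are arbitrary choices.

```python
import random

def max_drawdown_r(trades_r):
    """Largest peak-to-trough decline of the cumulative R curve."""
    equity = peak = worst = 0.0
    for r in trades_r:
        equity += r
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst

def monte_carlo_drawdowns(trades_r, n_runs=2000, seed=42):
    """Resample trades with replacement and collect the max drawdown of each run."""
    rng = random.Random(seed)
    n = len(trades_r)
    draws = [max_drawdown_r(rng.choices(trades_r, k=n)) for _ in range(n_runs)]
    return sorted(draws)

# Hypothetical trade list in R: 48 winners of +2.8R and 52 losers of -1R.
trades = [2.8] * 48 + [-1.0] * 52
draws = monte_carlo_drawdowns(trades)
median_dd = draws[len(draws) // 2]
p95_dd = draws[int(0.95 * len(draws))]
print(f"median max drawdown: {median_dd:.1f}R")
print(f"95th percentile max drawdown: {p95_dd:.1f}R")
```

Sizing positions against the 95th percentile rather than the single historical drawdown gives a more conservative picture of what the strategy can put you through.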

The Uncomfortable Truth About Most Backtests

Most traders run backtests to confirm a strategy they already believe works. The methodology mistakes above are almost always made in the direction of making results look better. Almost nobody runs a backtest and then makes it more conservative.

The discipline required for valid backtesting - holding out data, accepting lower theoretical returns, testing on multiple regimes - runs counter to the psychological pull of seeing a great equity curve and wanting it to be real.

A backtest that survives proper methodology and still shows EV of 0.80R with a Profit Factor of 2.61 is genuinely valuable. It's less exciting than the 1.64R naive result, but it's a strategy you can actually trade with realistic expectations.

The traders who succeed long-term in systematic trading are the ones who are harder on their backtests than the market will be. The market gets the final vote on whether your edge is real - and it has no interest in sparing your feelings.